Java EE 7已于6月中旬正式发布,新版本提供了一个强大、完整、全面的堆栈来帮助开发者构建企业和Web应用程序——为构建HTML5动态可伸缩应用程序提供了支持,并新增大量规范和特性来提高开发人员的生产力以及满足企业最苛刻的需求。

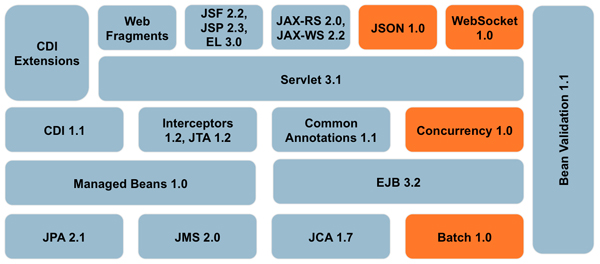

下面的这个图表包含了Java EE 7中的各种组件。橙色部分为Java EE中新添加的组件。

尽管新的平台包含了诸多新的特性,但是开发者对此似乎并不满足,尽管他们中的大部分还没有迁移到Java EE 7(或许是由于Java EE 7的特性还不完善),但是这并不影响他们对于Java EE 8特性的设想。

比如,在Java EE 6发布(2009年12月10日发布)后,开发者Antonio Goncalves认为该版本并没有解决一些问题,因此写了一个希望在Java EE 7中包含的特性清单。有趣的是,他写的4个特性中,其中有2个(flows和batch)已经包含在Java EE 7中了,而第3个特性(caching)原本也计划包含其中,但由于开发进度关系,在Java EE 7最终发布前被舍弃。

此举促使开发者Arjan Tijms也写了一个他希望在Java EE 8中出现的特性清单,如下。

- 无处不在的CDI(Contexts and Dependency Injection for Java EE,上下文与依赖注入)

- 更深入的Pruning(修剪)和Deprecating(弃用)

- 一个标准的缓存API

- administrative objects(管理对象)的应用内替代品

- 综合的现代化的安全框架

- 平台范围内的配置

下面就来详细阐述这些特性的必要性。

1. 无处不在的CDI

实际上这意味着2种不同的东西:使CDI可以用在目前不能用的其他地方、基于CDI来实现和改造其他规范中的相关技术。

a. 使CDI可以用在其他地方

与Java EE 6相比,Java EE 7中的CDI的适用范围已经扩大了很多,比如CDI注入现在可以工作在大多数JSF组件(artifacts)中,比如基于bean validation的约束验证器。不幸的是,只是大部分JSF组件,并非所有的,比如转换器和验证器就不行,尽管OmniFaces 1.6将支持这些特性,但最好是在Java EE 7中能够开箱即用。

此外,Java EE 7中的CDI也没有考虑到JASPIC组件,在此之中注入操作将无法工作。即使http请求和会话在Servlet Profile SAM中可用,但是当SAM被调用时,相应的CDI作用域也不会被建立。这意味着它甚至不能通过bean管理器以编程方式来检索请求或会话bean作用域。

还有一种特殊情况是,各种各样的平台artifacts可以通过一些替代的注解(如@PersistenceUnit)来注入,但早期的注入注解(@Resource)仍然需要做很多事情,比如DataSource。即使Java EE 7中引入了artifacts(如任务调度服务),但也不得不通过“古老”的@Resource来注入,而不是通过@Inject。

b. 基于CDI来实现和改造其他规范中的相关技术

CDI绝对不应该只专注于在其他规范中已经解决的那些问题,其他规范还可以在CDI之上来实现它们各自的功能,这意味着它们可以作为CDI扩展。以Java EE 7中的JSF 2.2为例,该规范中的兼容CDI的视图作用域可作为CDI扩展来使用,并且其新的flow作用域也可被立即实现为CDI扩展。

此外,JTA 1.2现在也提供了一个CDI扩展,其可以声明式地应用到CDI托管的bean中。此前EJB也提供了类似的功能,其背后技术也使用到了JTA,但是声明部分还是基于EJB规范。在这种情况下,可以通过JTA来直接处理其自身的声明性事务,但是这需要在CDI之上进行。

尽管从EJB 3版本开始,EJB beans已经非常简单易用了,同时还相当强大,但问题是:CDI中已经提供了组件模型,EJB beans只是另一个替代品。无论各种EJB bean类型有多么实用,但是一个平台上有2个组件模型,容易让用户甚至是规范实现者混淆。通过CDI组件模型,你可以选择需要的功能,或者混合使用,并且每个注解提供了额外的功能。而EJB是一个“一体”模式,在一个单一的注解中定义了特定的bean类型,它们之间可以很好地协同工作。你可以禁用部分不想使用的功能。例如,你可以关闭bean类型中提供的事务支持,或者禁用@Stateful beans中的passivation,或者禁用@Singleton beans中的容器管理锁。

如果EJB被当做CDI的一组扩展来进行改造,可能最终会更好。这样就会只有一个组件模型,并且具有同样有效的功能。

这意味着EJB的服务,如计时器服务(@Schedule、@TimeOut )、@Asynchronous、 @Lock、@Startup/@DependsOn和@RolesAllowed都应该能与CDI托管的bean一起工作。此外,现有EJB bean类型提供的隐式功能也应该被分解成可单独使用的功能。比如可以通过@Pooled来模拟@Stateless beans提供的容器池,通过@CallScoped来模拟调用@Stateless bean到不同的实例中的行为。

2. 更深入的Pruning(修剪)和Deprecating(弃用)

在Java EE平台中,为数众多的API可能会令初学者不知所措,这也是导致Java EE如此复杂的一个重要原因。因此从Java EE 6版本开始就引入了Pruning(修剪)和Deprecating(弃用)过程。在Java EE 7中,更多的技术和API成为了可选项,这意味着开发者如果需要,还可以包含进来。

比如我个人最喜欢的是JSF本地托管bean设施、JSP视图处理程序(这早在2009年就被弃用了),以及JSF中各种各样的功能,这些功能在规范文件中很长一段时间一直被描述为“被弃用”。

如果EJB组件模型也被修剪将会更好,但这有可能还为时过早。其实最应该做的是继续去修剪EJB 2编程模型相关的所有东西,比如在Java EE 7中依然存在的home接口。

3. 一个标准化的缓存API

JCache缓存API原本将包含在Java EE 7中,但不幸的是,该API错过了重要的公共审查的最后期限,导致其没能成为Java EE 7的一部分。

如果该规范能够在Java EE 8计划表的早期阶段完成,就有可能成为Java EE 8的一部分。这样,其他一些规范(如JPA)也能够在JCache之上重新构建自己的缓存API。

4. 所有管理对象(administrative objects)的应用内替代品

Java EE中有一个概念叫“管理对象(administrative objects)”。这是一个配置在AS端而不是在应用程序本身中的资源。这个概念是有争议的。

对于在应用服务器上运行许多外部程序的大企业而言,这可以是一个大的优势——你通常不会想去打开一个外部获得的应用程序来改变它连接的数据库的相关细节。在传统企业中,如果在开发人员和操作之间有一个强大的分离机制,这个概念也是有益的——这可以在系统安装时分别设置。

但是,这对于在自己的应用服务器部署内部开发的应用程序的敏捷团队来说,这种分离方式是一个很大的障碍,不会带来任何帮助。同样,对于初学者、教育方面的应用或者云部署来说,这种设置也是非常不可取的。

从Java EE 6的@DataSourceDefinition开始,许多资源(早期的“管理对象”)只能从应用程序内部被定义,比如JMS Destinations、email会话等。不幸的是,这并不适用于所有的管理对象。

不过,Java EE 7中新的Concurrency Utils for Java EE规范中有明确的选项使得它的资源只针对管理对象。如果在Java EE 8中,允许以一个便携的方式从应用程序内部配置,那么这将是非常棒的。更进一步来说,如果Java EE 8中能够定义一种规范来明确禁止资源只能被administrative,那么会更好。

5. 综合的现代化的安全框架

在Java EE中,安全一直是一个棘手的问题。缺乏整体和全面的安全框架是Java EE的主要缺点之一,尤其是在讨论或评估竞争框架(如Spring)时,这些问题会被更多地提及。

并不是Java EE没有关于安全方面的规定。事实上,它有一整套选项,比如JAAS、JASPIC、JACC、部分的Servlet安全方面的规范、部分EJB规范、JAX-RS自己的API,甚至JCA也有一些自己的安全规定。但是,这方面存在相当多的问题。

首先,安全标准被分布在这么多规范中,且并不是所有这些规范都可以用在Java EE Web Profile中,这也导致难以推出一个综合的Java EE安全框架。

第二,各种安全API已经相当长一段时间没有被现代化,尤其是JASPIC和JACC。长期以来,这些API只是修复了一些小的重要的问题,从来没有一个API像JMS 2一样被完整地现代化。比如,JASPIC现在仍然针对Java SE 1.4。

第三,个别安全API,如JAAS、JASPIC 和JACC,都是比较抽象和低层次的。虽然这为供应商提供了很大的灵活性,但是它们不适合普通的应用程序开发者。

第四,最重要的问题是,Java EE中的安全机制也遭遇到了“管理对象”中同样的问题。很长一段时间,所谓的Java EE声明式安全模型主要认证过程是在AS端按照供应商特定方式来单独配置和实现的,这再次让安全设置对于敏捷团队、教育工作者和初学者来说成为一件困难的事。

以上这些是主要的问题。虽然其中一些问题可以在最近的Java EE升级中通过增加小功能和修复问题来解决。然而,我的愿望是,能够在Java EE 8中,通过一个综合的和现代化的安全框架(尽可能地构建在现有安全基础上)将这些问题解决得更加彻底。

6. 平台范围内的配置

Java EE应用程序可以使用部署描述文件(比如web.xml)进行配置,但该方法对于不同的开发阶段(如DEV、BETA、LIVE等)来说是比较痛苦的。针对这些阶段配置Java EE应用程序的传统的方法是通过驻留在一个特定服务器实例中的“管理对象”来实现。在该方法中,配置的是服务器,而不是应用程序。由于不同阶段会对应不同的服务器,因此这些设置也会随之自动改变。

这种方法有一些问题。首先在AS端的配置资源是服务器特定的,这些资源可以被标准化,但是它们的配置肯定没有被标准化。这对于初学者来说,在即将发布的应用程序中进行解释说明比较困难。对于小型开发团队和敏捷开发团队而言,也增加了不必要的困难。

对于配置Java EE应用程序,目前有很多可替代的方式,比如在部署描述符内使用(基于表达式语言的)占位符,并使部署描述符(或fragments)可切换。许多规范已经允许指定外部的部署描述符(如web.xml中可以指定外部的faces-config.xml文件,persistence.xml中可以指定外部的orm.xml文件),但是没有一个统一的机制来针对描述符做这些事情,并且没有办法去参数化这些包含的外部文件。

如果Java EE 8能够以一种彻底的、统一平台的方式来解决这些配置问题,将再好不过了。似乎Java EE 8开发团队正在计划做这样的事情。这将会非常有趣,接下来就看如何发展了。

结论

Java EE 8目前尚处于规划初期,但愿上面提到的大多数特性能够以某种方式加以解决。可能“无处不在的CDI”的几率会大一些,此方面似乎已经得到了很大的支持,且事情已经在朝着这个方向发展了。

标准化缓存API也非常有可能,它几乎快被包含在Java EE 7中了,但愿其不会再次错过规范审查的最后期限。

此外,“现代化的安全框架”这一特性已经被几个Java EE开发成员提到,但是此方面工作尚未启动。这可能需要相当大的努力,以及大量其他规范的支持,这是一个整体性问题。顺便说一句,安全框架也是Antonio Goncalves关于Java EE 7愿望清单中的第4个提议,希望Java EE 8可以解决这一问题。