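Gartner's top ten strategic technology trends are analyzed below:

1. Mobile device battles

Mobile devices are diversifying. Windows is only one of many environments IT must support, so IT needs to support a heterogeneous mix of platforms.

2. Mobile applications and HTML5

HTML5 will become increasingly important as a way to meet diverse needs, including those of enterprise applications that place a high value on security.

3. Personal cloud

The personal cloud shifts the focus from the client device to cloud-based services delivered across devices.

4. Enterprise app stores

With enterprise app stores, IT's role shifts from centralized planner to market manager, providing governance and brokerage services to users and possibly ecosystem support to application specialists.

5. Internet of Things

The Internet of Things is a concept describing how the Internet will extend into the physical world as physical items, such as consumer electronics and physical assets, are connected to it.

6. Hybrid IT and cloud computing

Build a private cloud and a management platform for it, then use that platform to manage both internal and external services.

7. Strategic big data

Enterprises should treat big data as a transformative architecture, replacing the homogeneous relational database with a diverse set of data stores.

8. Actionable analytics

The core of big data is giving enterprises actionable insights. Driven by mobile networks, social networks, and massive data volumes, enterprises need to change how they do analytics to respond to new perspectives.

9. In-memory computing

In-memory computing is delivered as a cloud service to internal or external users; millions of events can be scanned within tens of milliseconds to detect correlations and patterns.

10. Integrated ecosystems

The market is shifting from loosely coupled heterogeneous systems toward more integrated systems and ecosystems, with applications packaged together with hardware, software, and services.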

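Combining practical experience with customer needs, the following conclusions can be drawn:

1. The big data era has arrived

The growth of the Internet of Things and the surge in unstructured and semi-structured data are driving demand for big data applications. Using big data efficiently is the direction in which enterprises will extract value from their data assets.

2. Cloud computing remains the main theme, and the cloud will pay more attention to the individual

Cloud computing is one of the core technologies changing the state of IT, and it will be the foundation for delivering big data and app stores. The growth of the personal cloud will push cloud services to focus more on the individual.

3. The mobile trend: enterprise app stores will change the traditional software delivery model

Windows will gradually cease to be the dominant client platform, and IT needs to move toward supporting multi-platform services. Building enterprise app stores on a cloud platform will gradually shift IT's role from centralized planner to application market manager.

4. The Internet of Things will keep changing how we work and live

The Internet of Things will change both life and work, and it will be a transformative force. Within the IoT field, IPv6 is a technology worth studying.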

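The future enterprise IT architecture diagram follows:

Architecture notes:

1. Applications will be split up and the client will become minimal. Users need to care only about the small part relevant to them, and opening the system will no longer present hundreds of business menus.

2. Enterprise back ends will be built mainly on distributed architectures, and big data service capability will become the concentrated expression of an enterprise's core competitiveness.

3. Technologies for processing and analyzing unstructured data will receive unprecedented attention.

My own knowledge is limited, so this is for reference only; corrections are welcome!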

http://blog.csdn.net/sdhustyh/article/details/8484780


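Introduction

James is an enterprise-grade mail server that fully implements the SMTP, POP3, and NNTP protocols. The James server is also a mail application platform. Its core is the Mailet API, and the James server is a mailet container, which makes it easy to build powerful mail applications. The open-source James project is widely used in mail-related projects: you can use it to set up your own mail server and implement your own business logic against the Mailet API. James is built on the Avalon application framework and the Phoenix Avalon framework container; Phoenix provides strong support for the James server. Note that the Avalon open-source project has since been closed.

Quick start

Installing James

I used James 2.3.1, which can be downloaded from http://james.apache.org/download.cgi

Now let's begin our James journey. First, unzip the downloaded james-binary-2.3.1.zip into your installation directory; you can think of this as the installation. I unzipped it to C:\ and renamed the folder to james, so James is installed in C:\james. Simple!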

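Background: a few things to learn first

When I started using James, I found it important to understand its application layout first; otherwise you will be confused later.

The layout looks like this: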

James

|_ _apps

|_ _bin

|_ _conf

|_ _ext

|_ _lib

|_ _logs

|_ _tools

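We'll focus on two folders, bin and apps.

In the bin directory, run.bat and run.sh are the James startup scripts. These are the only files you need to remember.

After startup the console shows: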

Using PHOENIX_HOME: C:\james

Using PHOENIX_TMPDIR: C:\james\temp

Using JAVA_HOME: C:\j2sdk1.4.2_02

Phoenix 4.2

James Mail Server 2.3.1

Remote Manager Service started plain:4555

POP3 Service started plain:110

SMTP Service started plain:25

NNTP Service started plain:119

FetchMail Disabled
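To confirm that the four services in the startup log are actually accepting connections, a small probe can try each port in turn. This is only a sketch: the class name `PortProbe` and the one-second timeout are my own choices, and the ports are simply the ones printed in the log above.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical helper, not part of James: checks whether TCP ports accept connections.
class PortProbe {
    // Returns true if a TCP connection to host:port succeeds within timeoutMs.
    static boolean isOpen(String host, int port, int timeoutMs) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMs);
            return true;
        } catch (IOException e) {
            return false;
        }
    }

    // Probes each named service and records whether its port accepted a connection.
    static Map<String, Boolean> probe(String host, Map<String, Integer> services, int timeoutMs) {
        Map<String, Boolean> result = new LinkedHashMap<>();
        for (Map.Entry<String, Integer> service : services.entrySet()) {
            result.put(service.getKey(), isOpen(host, service.getValue(), timeoutMs));
        }
        return result;
    }

    public static void main(String[] args) {
        // Ports taken from the console output above.
        Map<String, Integer> services = new LinkedHashMap<>();
        services.put("Remote Manager", 4555);
        services.put("POP3", 110);
        services.put("SMTP", 25);
        services.put("NNTP", 119);
        probe("localhost", services, 1000).forEach((name, open) ->
                System.out.println(name + ": " + (open ? "listening" : "not reachable")));
    }
}
```

A port that accepts a connection only shows that something is listening there; it does not prove that the listener is James.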

Under the apps directory is a james subdirectory, which is the core.

james

|_ _SAR-INF

|_ _conf

|_ _logs

|_ _var

    |_mail

        |_address-error

        |_error

        |_indexes

        |_outgoing

        |_relay-denied

        |_spam

        |_spool

    |_nntp

        |_....

        …

    |_users

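SAR-INF contains config.xml, the core configuration file of James.

logs contains the James logs. Debugging depends on them.

var contains several folders whose purposes can be guessed from their names. mail stores mail, nntp is for the news server, and users stores all the mail server's users, that is, the part before the @ in an email address. For example, in pig@sina.com the user is pig.

Creating users:

We'll create a few users in James for testing sending and receiving mail. (You can also manage without James's own users.) James provides a telnet interface for adding users. Let me demonstrate.

First connect to the James Remote Manager with telnet.

1. Type telnet localhost 4555 and press Enter.

2. Enter the administrator user name and password (user/pwd: root/root is the default and can be changed in config.xml).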

JAMES Remote Administration Tool 2.3.1

Please enter your login and password

Login id:

root

Password:

root

Welcome root. HELP for a list of commands

3. Add users

adduser kakaxi kakaxi

User kakaxi added

adduser mingren mingren

User mingren added

4. Check the result

listusers

Existing accounts 2

user: mingren

user: kakaxi

This output shows that the users were added successfully.
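The same telnet session can be scripted. The sketch below builds the lines typed above (login, password, adduser, listusers) and sends them over a plain socket to port 4555. The class name `RemoteManagerScript` is hypothetical. Two things are assumptions here: that the Remote Manager accepts a `quit` command to end the session, and that it tolerates all input being sent before the prompts are read.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStreamReader;
import java.io.OutputStreamWriter;
import java.io.PrintWriter;
import java.net.Socket;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical helper, not part of James: replays the manual telnet session.
class RemoteManagerScript {
    // Builds the lines a person would type: login, password, one adduser per user, listusers, quit.
    static List<String> buildScript(String adminLogin, String adminPassword, Map<String, String> users) {
        List<String> lines = new ArrayList<>();
        lines.add(adminLogin);
        lines.add(adminPassword);
        for (Map.Entry<String, String> user : users.entrySet()) {
            lines.add("adduser " + user.getKey() + " " + user.getValue());
        }
        lines.add("listusers");
        lines.add("quit"); // assumption: ends the session so the read loop below terminates
        return lines;
    }

    // Sends every line, then prints whatever the server answers until it closes the connection.
    static void run(String host, int port, List<String> lines) throws IOException {
        try (Socket socket = new Socket(host, port);
             PrintWriter out = new PrintWriter(
                     new OutputStreamWriter(socket.getOutputStream(), StandardCharsets.US_ASCII));
             BufferedReader in = new BufferedReader(
                     new InputStreamReader(socket.getInputStream(), StandardCharsets.US_ASCII))) {
            for (String line : lines) {
                out.print(line + "\r\n");
            }
            out.flush();
            String reply;
            while ((reply = in.readLine()) != null) {
                System.out.println(reply);
            }
        }
    }

    public static void main(String[] args) throws IOException {
        Map<String, String> users = new LinkedHashMap<>();
        users.put("kakaxi", "kakaxi");
        users.put("mingren", "mingren");
        run("localhost", 4555, buildScript("root", "root", users));
    }
}
```

Keeping `buildScript` separate from the socket code makes it possible to check the generated commands without a running server.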

Sender

This class is mainly for testing our mail server; it does not need to be packaged with the application.

Java code

package com.paul.jamesstudy;

import java.util.Date;

import java.util.Properties;

import javax.mail.Authenticator;

import javax.mail.Message;

import javax.mail.PasswordAuthentication;

import javax.mail.Session;

import javax.mail.Transport;

import javax.mail.internet.InternetAddress;

import javax.mail.internet.MimeMessage;

public class Mail {

private String mailServer, From, To, mailSubject, MailContent;

private String username, password;

private Session mailSession;

private Properties prop;

private Message message;

// Authenticator auth; // authentication

public Mail() {

// Mail settings

username = "kakaxi";

password = "kakaxi";

mailServer = "localhost";

From = "kakaxi@localhost";

To = "mingren@localhost";

mailSubject = "Hello Scientist";

MailContent = "How are you today!";

}

public void send(){

EmailAuthenticator mailauth =

new EmailAuthenticator(username, password);

// Configure the mail server

prop = System.getProperties();

prop.put("mail.smtp.auth", "true");

prop.put("mail.smtp.host", mailServer);

// Create the mail Session (getDefaultInstance is static, so call it on the class)

mailSession = Session.getDefaultInstance(prop, mailauth);

message = new MimeMessage(mailSession);

try {

message.setFrom(new InternetAddress(From)); // set the sender

message.setRecipient(Message.RecipientType.TO,

new InternetAddress(To)); // set the recipient

message.setSubject(mailSubject); // set the subject

message.setContent(MailContent, "text/plain"); // set the body

message.setSentDate(new Date()); // set the sent date

Transport tran = mailSession.getTransport("smtp");

tran.connect(mailServer, username, password);

tran.sendMessage(message, message.getAllRecipients()); // uses the connection opened above; the static send() would open its own

tran.close();

} catch (Exception e) {

e.printStackTrace();

}

}

public static void main(String[] args) {

Mail mail;

mail = new Mail();

System.out.println("sending");

mail.send();

System.out.println("finished!");

}

}

class EmailAuthenticator extends Authenticator {

private String m_username = null;

private String m_userpass = null;

void setUsername(String username) {

m_username = username;

}

void setUserpass(String userpass) {

m_userpass = userpass;

}

public EmailAuthenticator(String username, String userpass) {

super();

setUsername(username);

setUserpass(userpass);

}

public PasswordAuthentication getPasswordAuthentication() {

return new PasswordAuthentication(m_username, m_userpass);

}

}
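After running the sender, one way to check that the message reached mingren's mailbox is to ask the POP3 service on port 110 how many messages it holds. The sketch below speaks the basic POP3 commands (USER, PASS, STAT, QUIT) over a plain socket, so it needs nothing beyond the JDK. The class name `Pop3Check` is hypothetical, and the sketch assumes the mingren account created earlier with password mingren.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStreamReader;
import java.io.OutputStreamWriter;
import java.io.PrintWriter;
import java.net.Socket;
import java.nio.charset.StandardCharsets;

// Hypothetical helper, not part of James: counts the messages waiting in a POP3 mailbox.
class Pop3Check {
    // Parses a STAT reply of the form "+OK <count> <size>" and returns the count.
    static int parseStat(String reply) {
        String[] parts = reply.trim().split("\\s+");
        if (parts.length < 3 || !parts[0].equals("+OK")) {
            throw new IllegalArgumentException("Unexpected STAT reply: " + reply);
        }
        return Integer.parseInt(parts[1]);
    }

    // Sends one command and returns its single-line reply, failing on anything but +OK.
    private static String command(PrintWriter out, BufferedReader in, String line) throws IOException {
        out.print(line + "\r\n");
        out.flush();
        String reply = in.readLine();
        if (reply == null || !reply.startsWith("+OK")) {
            // Report only the command verb so the password never appears in the error.
            throw new IOException("POP3 " + line.split(" ")[0] + " failed: " + reply);
        }
        return reply;
    }

    // Logs in, asks for the mailbox status, and logs out again.
    static int countMessages(String host, int port, String user, String password) throws IOException {
        try (Socket socket = new Socket(host, port);
             BufferedReader in = new BufferedReader(
                     new InputStreamReader(socket.getInputStream(), StandardCharsets.US_ASCII));
             PrintWriter out = new PrintWriter(
                     new OutputStreamWriter(socket.getOutputStream(), StandardCharsets.US_ASCII))) {
            String greeting = in.readLine();
            if (greeting == null || !greeting.startsWith("+OK")) {
                throw new IOException("Unexpected greeting: " + greeting);
            }
            command(out, in, "USER " + user);
            command(out, in, "PASS " + password);
            int count = parseStat(command(out, in, "STAT"));
            command(out, in, "QUIT");
            return count;
        }
    }

    public static void main(String[] args) throws IOException {
        int count = countMessages("localhost", 110, "mingren", "mingren");
        System.out.println("mingren has " + count + " message(s)");
    }
}
```

If the sender worked, the count should go up by one each time it runs, as long as the mailbox is not emptied in between.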

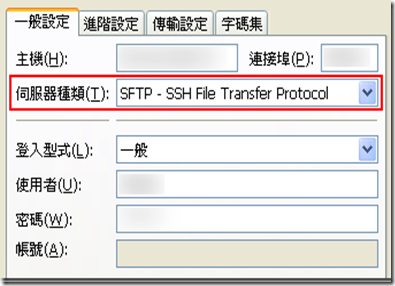

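A customer on a recent project needed to send us files over SFTP. Our company's servers all run Windows 2003 or Windows 2008, so I had to find an SFTP server that was free (this mattered a lot), easy to use, and stable.

After a long search I found the following:

1. OpenSSH

Reference article: 《Windows下用sftp打造安全传输》 ("Building secure transfers with SFTP on Windows")

OpenSSH installs quickly, followed by a number of settings at the CMD command line. I must have gotten something wrong, because the CuteFTP SFTP client kept reporting errors. After fiddling for a while I gave up; I'm not used to software without a GUI, and I'm no Linux enthusiast anyway.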

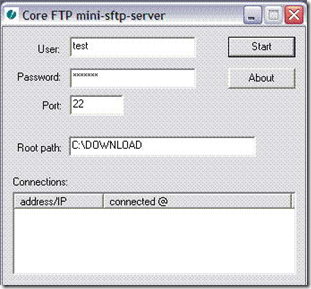

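2. Core FTP Mini-Sftp Server

Download link (onlinedown.net): http://www.onlinedown.net/soft/75991.htm

Core FTP Mini-Sftp Server is portable software with nothing to configure: the download is a single exe. Run it and the interface is startlingly simple, with just two buttons, Start and About. Enter any user name and password, click Start, and you're done; client access works fine. The drawback is that it hardly feels like server software. It cannot run as a system service, so if it is closed by accident, or someone forgets to start it after a reboot, you're in trouble. It also supports only one user, so I dropped it.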

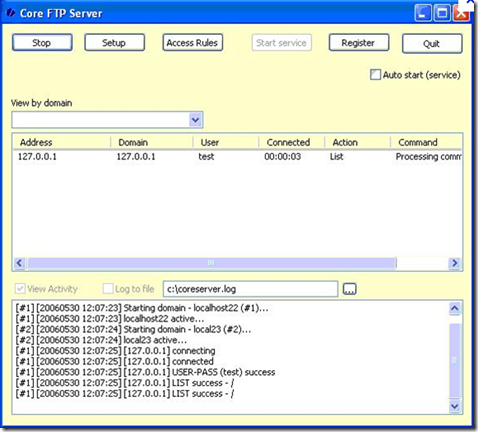

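3. Core FTP Server

Official site: http://www.coreftp.com/server/

I figured that since there is a Core FTP Mini-Sftp Server, there must be a fuller, more advanced version. A Google search found it, and it appeared to be free. I downloaded the latest version and installed it on the server. The GUI has plenty of settings and features. I created a domain in setup, and access from an SFTP client worked.

Drawback: the free version supports only one domain and two users, and the cheapest Standard edition costs 50 US dollars a year, so forget it; we're on a tight budget!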

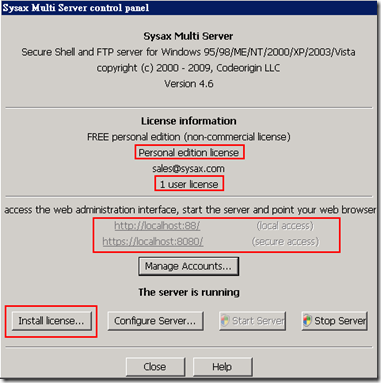

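4. Sysax Multi Server

Sysax Multi Server also offers a free, feature-limited edition. It is very easy to install and has a GUI for configuration, so it is convenient to manage. It also supports Windows 2008 well, unlike Cygwin, which does not support Windows 2008.

After downloading, remember to go to the "Buy a License for Sysax Multi Server or Sysax FTP Automation" page and click the "Request Personal Edition License for Sysax Multi Server" button to request a free serial number.

There is a free Personal Edition, but it has quite a few restrictions: only one simultaneous connection, no web-based remote administration, and so on. It truly is a "personal" edition, yet none of the essential features is missing, and it lets you transfer files securely. Considering future multi-user use, I gave it up.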

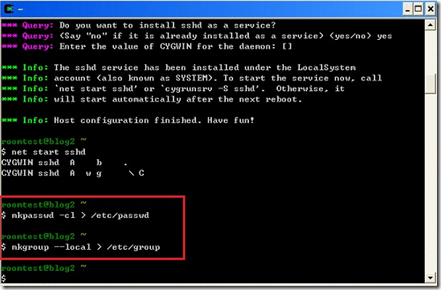

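5. SFTP Server under Cygwin

Reference articles: http://hi.baidu.com/www100/blog/item/e985c717e656b601c93d6d10.html

http://blog.csdn.net/ezdevelop/article/details/67936

Cygwin is a Linux-like environment for Windows. It consists of a DLL that provides a basic subset of UNIX functionality and a set of tools built on top of it. Once Cygwin is installed you can usually ignore it, and even command-line enthusiasts will find life more comfortable.

From the documentation, installation and use both looked inconvenient, so I did not download and test it myself and skipped it.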

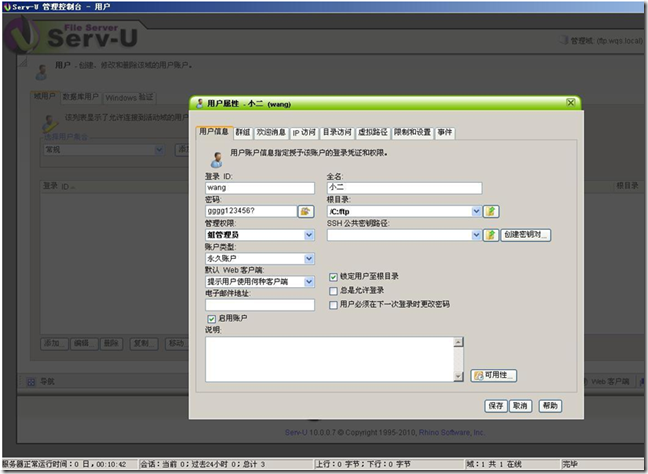

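6. Serv-U

The FTP server most used by administrators in China. It supports several file transfer services, including FTP, SFTP, and FTPS, and is very powerful. Cracked copies circulate widely and work well, for those who do not mind using them.

Personally, I don't like running cracked software or keygens on a server. My servers are also hosted abroad, where intellectual property is taken seriously, so I dropped Serv-U.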

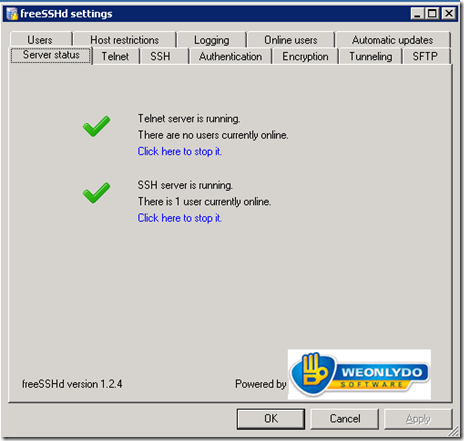

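7. freeSSHd

Download from the official site: http://www.freesshd.com

After searching high and low, here it was at last! That was my reaction after installing and trying freeSSHd. It is free and rich in features: it supports authentication for its own users, local system users, and domain users, and lets you selectively enable SFTP, Telnet, and Tunneling for each user, so features and services can be used without restriction. In short, it is excellent: free software that outdoes paid software. Strongly recommended.

This blog post describes how to use freeSSHd in detail: http://blog.163.com/ls_19851213/blog/static/531321762009815657395/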

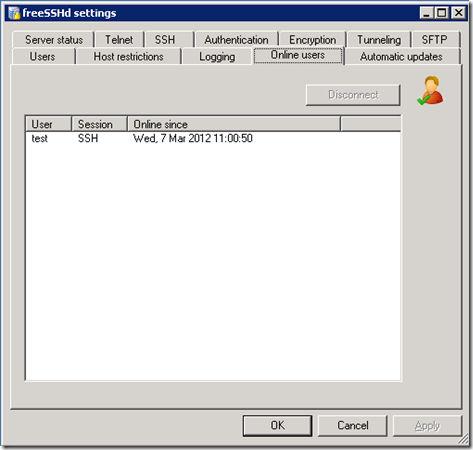

freeSSHd screenshot:

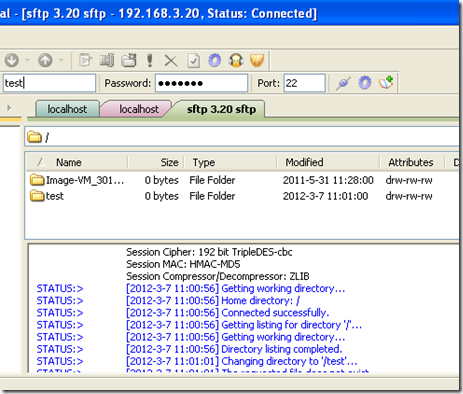

CuteFTP client screenshot:

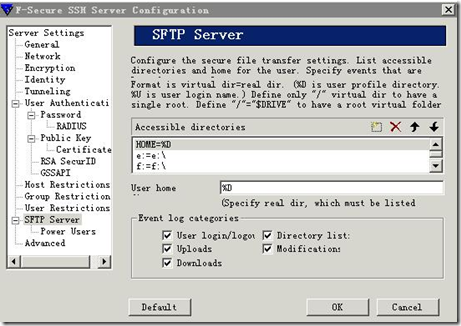

8. F-Secure SSH Server

F-Secure SSH Server is a commercial SSH server that also has a free limited edition. With freeSSHd in hand, I got lazy and did not try it, sorry.
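Whichever server you pick, a quick first check before pointing an SFTP client at it is to read the identification line that an SSH server sends when a client connects, which has the form `SSH-protoversion-softwareversion` followed by optional comments. The sketch below reads and parses that line. The class name `SshBanner`, port 22, and the three-second timeout are my own assumptions.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStreamReader;
import java.net.InetSocketAddress;
import java.net.Socket;
import java.nio.charset.StandardCharsets;

// Hypothetical helper: reads the SSH identification line of a server.
class SshBanner {
    // Splits "SSH-protoversion-softwareversion [comments]" into {protoversion, softwareversion}.
    static String[] parse(String line) {
        if (line == null || !line.startsWith("SSH-")) {
            throw new IllegalArgumentException("Not an SSH identification line: " + line);
        }
        String rest = line.substring(4);
        int dash = rest.indexOf('-');
        if (dash < 0) {
            throw new IllegalArgumentException("Missing software version: " + line);
        }
        String proto = rest.substring(0, dash);
        String software = rest.substring(dash + 1).trim();
        int space = software.indexOf(' ');
        if (space >= 0) {
            software = software.substring(0, space); // drop the optional comments
        }
        return new String[] { proto, software };
    }

    // Connects and returns the parsed identification, skipping any lines sent before it.
    static String[] read(String host, int port, int timeoutMs) throws IOException {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMs);
            socket.setSoTimeout(timeoutMs);
            BufferedReader in = new BufferedReader(
                    new InputStreamReader(socket.getInputStream(), StandardCharsets.US_ASCII));
            String line;
            while ((line = in.readLine()) != null) {
                if (line.startsWith("SSH-")) {
                    return parse(line);
                }
            }
            throw new IOException("Connection closed before an SSH identification line arrived");
        }
    }

    public static void main(String[] args) throws IOException {
        String[] id = read("localhost", 22, 3000);
        System.out.println("protocol " + id[0] + ", server software " + id[1]);
    }
}
```

A valid identification line only shows that an SSH server is answering; whether SFTP is enabled for a given user still has to be tested with a real client such as CuteFTP.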

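Many people in China think IT is a young person's game, and that after 30 there is little chance to keep growing. The reality is different. I have worked in .NET and Java development for about ten years, and I'd like to draw on my own experience to discuss this with you.

Be clear about why you are entering the field

Many people enter IT for the "high pay". With a little HTML and DIV+CSS it is not hard to become a page developer; such jobs are easy to find and pay somewhat better than ordinary work, so they have become a popular choice for university graduates. If that is your only reason for entering the field, be careful. IT is already competitive, page design especially, because so many people can do it. To save costs, most companies hire such staff only when needed; when orders dry up, some small companies will find excuses, or cut pay, to push these employees out. Job ads often read "Page designer wanted. Requirements: under 30 ... recent graduates welcome", because the technical bar for this work is low and hiring graduates is cheaper. So I think "IT is a young person's game" really applies only to this group. If you lack the drive to improve and enter only because "the pay is high and jobs are easy to find", the saying will come true for you.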

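Choose the right tool

Java, C#, PHP, C++, VB ... there are more than ten popular languages; which has the most potential? A programming language is just a tool. "One concentrated strike beats a scattered attack": whichever language you choose, study it wholeheartedly, and once you know it well, picking up another becomes easy. Languages fall roughly into three categories:

1. Web development

The web has become a bridge for worldwide communication. Languages such as JavaScript, PHP, and Ruby are used mostly for web development.

2. Enterprise software development

Java, C#, and VB are object-oriented languages used mostly for enterprise systems.

3. System software

C, C++, and Objective-C are used mostly for system software and embedded development.

These categories are not absolute: Java, C#, and VB are also often used for dynamic websites, and many projects combine several languages, each playing to its strengths. Still, when you are starting out, pick one suitable tool and "study it with full focus, in one concentrated strike".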

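Be clear about your direction

Once you know a language reasonably well and start to feel like a "walking corpse", a mere development tool yourself, it is time to decide where you are heading.

In most companies, UI-layer developers are mostly in their twenties. They are energetic and have no family burdens. Two years ago, when ASP.NET MVC and Silverlight first appeared, they could buy a few books on the way home or read online in the evenings, and after three to five weeks of study they understood the technology well enough to use it. People over 30 are mostly married. They start work at 9:00 hoping only that 6:00 comes quickly so they can go home for dinner, then play with the children and check their homework, and they have little appetite for learning new technology. So many programmers approaching 30 feel a sense of urgency (including me at 30): what happens in a few years? That is when you most need a clear goal and steady progress toward it. In summary, you can choose from the following paths:

1. Move from technology to business

In many developed countries talent is highly valued, and the pay gap between a senior programmer and a Project Manager is usually no more than 15%. China, however, is the world's most populous country with abundant talent, so talent is often squandered. In a small company's development department you will often see new faces, but the PM rarely changes. The boss usually knows nothing about technology; as he sees it, keeping the PM on side is enough to keep the technical work under control, and he need not concern himself with turnover in the technical team. Moving from developer to PM is therefore one way forward, but developers should understand that being a PM is less about applying technology and more about management. A PM's main work is to organize the team, control costs, manage the business, track project progress, communicate with customers, coordinate work, and report regularly. A successful PM needs strong organizational skills: raising the team's motivation, playing to its strengths, and obtaining the greatest profit for the company from limited development resources. A PM usually does not do hands-on development and focuses on the business, yet still needs some understanding of technology (I once wrote an article on why PMs need to understand technology; it drew much support and no little controversy). I'll repeat my view here: management ability matters most for a PM, but sufficient technical understanding is the bridge for communicating with the team. Only with it can the PM work closely with team members, make them feel that their work matters, and so motivate them rather than ignore them. Technical knowledge is not a sufficient condition for being a successful PM, but it is a necessary one!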

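2. Move from programmer to technical management

A Team Leader's duties resemble a Project Manager's, but the TL focuses more on technical development. A large project typically has one or two development teams led by Team Leaders building the core, with the rest assigned to other groups or outsourced. A passage often seen online aptly describes the difference between PM and TL: "Technical people are happy to be led, but they do not like being managed, herded or ordered about like cattle. Managers force people to obey their orders, while leaders work alongside them. Management is objective and impersonal; it assumes the managed have no thoughts or feelings and are told what to do and how. Leadership is guiding; it inspires people to reach goals. Leadership is intensely personal: it is not something you can command, measure, or test."

Both PM and TL need a deep understanding of business and technology. The PM leans toward business management: profit, risk, and so on. The TL leans toward technical matters: project cost, development difficulty, software architecture. Some see technology and management as the fish and the bear's paw, impossible to have both; in my view they are inseparable, like a steelyard and its weight. If you keep deepening your understanding of both, moving from programmer to technical manager is only a matter of time. For example, an ordinary .NET programmer may start out confined to ASP.NET page development. Once he wants to grow, he will naturally take an interest in other UI approaches such as ASP.NET MVC, Silverlight, WinForms, and WPF, and before long he will see that these are just tools with little difference in underlying principle. Next he will go a layer deeper into communication: TCP/IP, Web Services, WCF, Remoting, and feel his understanding advancing. From there he moves on to workflow, design patterns, object-oriented design, domain-driven design, and service-oriented development, and eventually becomes a technical leader. This is only an illustration, but note that while learning you must keep communicating with colleagues. Many developers like to work alone and finish a project single-handedly without outside interference. Yet however capable you are, you cannot carry a large project alone. Teamwork and communication with colleagues are essential, and they are a necessary condition for becoming a successful TL.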

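3. Pursue technology alone

Becoming a top-tier technical expert is the job I most admire, though I lack the ability to reach it. Many developers feel that business always carries "the smell of money": the boss never cares whether development follows sound principles or has been properly tested; he boasts endlessly to customers, and as long as the project ships on time without major problems it counts as a success. But we should understand that the ultimate goal of a project is to make money, so limits on cost and control of efficiency are necessary, which is why projects need managers. Still, developers long to escape this "clamor of money" and immerse themselves in technology, so those with a strong interest often study one technology in depth and become technical elites. Here I must say something discouraging: China is already the world's second-largest economy, but its output comes mainly from contract manufacturing. China has abundant talent yet lags developed countries in high-tech industries. In recent years the country has made real leaps in high technology, but a gap with Europe and the United States remains. So becoming a cutting-edge technologist in China is undeniably harder than abroad. In my view, a top developer must understand low-level technologies deeply: C, C++, assembly language, embedded development, the Windows API, and the Linux API. Java, .NET, and the like are called high-level languages not because they are superior to C, C++, or assembly but because they wrap that functionality and make development simpler, which suits enterprise software. To build low-level software or large systems you must use C, C++, or assembly; mastering them is one condition for becoming a top expert.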

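Set your future goals

People grow through experience. The ancient saying "at thirty, one stands firm" describes not social status or income but a person's goals for the future and sense of direction in life. To succeed, set long-term goals early; the same goes for developers. Personalities and preferences differ, so the paths people choose differ too:

1. Strike out on your own and start a business

Approaching 30, many people feel that to make real money they must set up on their own. In first-tier cities such as Beijing, Shanghai, and Guangzhou, a new apartment costs roughly 20,000 to 40,000 yuan per square meter, while a project manager at an ordinary IT company has a base income of roughly 15,000 to 30,000 yuan (large multinationals are another matter). Buying a 100-square-meter apartment would take nearly ten years of salary even without eating or drinking. So starting a business is a goal for many IT developers, and reaching it means putting more emphasis on the business side. Undeniably, much in Chinese society runs on "relationships"; 30 years of reform and opening up have made the economy boom, but habits left over from thousands of years have not disappeared entirely. If you want to start a business, build good relationships with customers and keep mutually beneficial ties with partners; this will help your business later.

2. Step back to the second line

This is also a common choice. After starting a family, many people feel the pressure is too great. Life is not only career, and they want more time for relatives and children. So they choose work such as systems analysis, system maintenance, university teaching, or lecturing at training institutes. The income is stable and the pressure is usually lower than for front-line developers.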

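3. Keep striving and go further

Whether you are a Project Manager or a Team Leader, the next promotion splits in two directions. From PM to company management, what you face changes a lot. A general manager manages not the cost of one or two projects but the operation of an entire department and the business processes of the whole company, so the burden is heavier. I once had a boss, Dr. Peng, the top leader of the company, with an annual salary over three million yuan and appearances in newspapers and magazines. He would show up briefly at certain meetings and say a few words; he did not directly run daily operations or business management. This does not mean management is idle: they deal with far more external relationships and contacts with partner companies. That is very different from a PM's work, so moving from PM to management takes much more effort.

Moving from Team Leader to technical director also changes the direction of the work. As noted earlier, a TL focuses on the technical level, on interaction and cooperation within the team, and on the quality of the development. A technical director need not take part directly in any one project; the concern is efficiency and results, how to use limited development resources sensibly, and how to control development risk and its likely effects.

Reflections

Over more than eight years I went from programmer to project manager, with many twists along the way. Everyone's circumstances differ and so do their paths; as the saying goes, all roads lead to Rome, and there is more than one road to success. I don't want to mislead anyone; I only want to describe my own direction. If you are a developer, "programmer -> architect -> Team Leader (Project Manager) -> technical director" is a good path, and it is the one I chose. In China, if you want to advance, whether your focus is technology or business, you cannot avoid management. In large companies a team often has both a PM and an architect. Their responsibilities differ, but the architect usually earns less than the PM, who is the team's core leader and key figure, because the PM plays a major role in whether the company makes money. There is no absolute line between PM and TL; in small and medium-sized companies a development team has only 3 to 5 people, and one TL often handles business, cost control, architecture design, and development management together. That is why I put Team Leader and Project Manager at the same level. The boss often does not know who the team's architects and programmers are and asks only the PM about progress, so only at this level do you get the chance to develop management skills and room to rise. As for becoming a technical director, the requirement is no longer managing a single project but adopting new technologies, using development resources sensibly, keeping projects agile, and so on. I am still feeling my way there and will not say more.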

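Hand in hand with programming, asleep beside the code: from programmer to technical director

I have worked in IT for ten years and risen from programmer to technical director. Looking back, that road ran alongside ten stormy years of China's IT industry. For friends about to go into software R&D, here are my views on IT, and pure software development in particular:

I. Understand the current IT landscape and choose a suitable technical direction and starting point

As most of you know, knowledge in the IT industry is replaced quickly and competition is fierce.

If you have a clear plan or understanding of your direction before you start, I believe you will do better, go further, and earn more than others... heh.

Career directions in software generally include: pre-sales (marketing, business), product development (coding, design, testing), after-sales (support, implementation), and product management (project management and so on).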

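A. Pre-sales (marketing, business)

This work happens mainly in the early stage of software development (no product yet) or before a contract is signed (product exists).

It generally demands strong business and technical knowledge, not just connections and social skills.

To get others to buy your product you need professional product quality behind you, and you win through professional conversation, professional skills, and a professional grasp of the business.

Requirements:

Some social experience, technical or business experience, or an established network and social skills.

Advice:

Recent graduates and others who do not meet these conditions should think carefully. Of course nothing in this world is absolute; it depends on you.

In practice:

As far as I know, this work is done mostly by senior company staff (with connections and experience), business experts, or people with special backgrounds.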

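B. Product development (coding, design, testing)

This is of course the main force of the IT workforce, but it still needs careful thought.

If you want to be a designer or tester, it is best to code for a while first.

A good designer cannot be anything less than expert in the relevant technology platform!

Abroad, good testers are almost always selected from developers, and may even be expert developers.

a. Coding

Within this area it is best to start with coding. You can also start with testing, to see how others write code and build the software,

and draw on their testing experience; later, when you get the chance, spend some time writing code.

Sometimes you can also write a piece of software yourself. Coding and testing feed each other in both directions; it is not coding first and testing after.

Before writing code, study other people's software or get guidance from experts. Learning the technology itself is no longer a problem; the limitation lies in connecting what you learn into a single thread and thinking it through.

So familiarity with the whole project or business process does not come from coding alone or from a training course. Self-study and training mostly solve the technical side, but building software takes more than technology.

"Three parts technology, seven parts business" is no exaggeration. Developers must also learn the business. Until you know the business details, don't rush into writing code, or the software will suffer later. Learn the business first.

So mastering one technology platform and language is a prerequisite for a developer, but some business knowledge is required too; only then are you a qualified developer. A developer who is expert in coding, understands design, and knows the business and the project process is an excellent one, and those are the requirements for senior R&D staff.

If you are just starting out, changing careers, or newly graduated, start with basic coding, and learn from experts or from mature projects.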

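b. Software design

This role requires a foundation of industry and technical experience. Pay is generally much higher, so many developers pursue it eagerly. But a good designer is not made in a year or two: you need expert coding skills, fluency with design patterns and the company's technology platform, command of design theory applied in practice, and familiarity with the company's business. This level also carries the most pressure; in good software, design accounts for nearly 70% of the weight.

I advise recent graduates and beginners not to crowd into this area. Even if you get the title, in my eyes you are not a qualified designer... Of course, if you really have the ability, congratulations.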

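c. Software testing

Know testing theory and its practical application, know coding and the relevant technology platform, and know the business well.

Software testing generally includes:

Unit testing: requires development skills for tracing and debugging; this is white-box testing.

Integration testing: tests the whole project flow; requires business knowledge and the ability to run functional or stress tests on the designed software; this is black-box testing.

Validation testing: requires thorough business knowledge; tests whether the software fully meets the customer's business requirements.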

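Overall advice:

1. Master one technology platform and know one business domain

A common beginner's mistake is to claim proficiency in VB, VC, .NET, Java, C++, C, Delphi, PB, nearly everything the market uses. If I see that on a résumé, the person is out of consideration.

The most widely used technology platforms worldwide fall into three families:

Sun's J2EE (Java): stronger in security and performance; used mostly in the mid-to-high-end market.

Microsoft's technology stack (C++, .NET, C#, VB): dominant in the mid and low-end market, and the most widely used personal computer platform in the world.

CORBA (a distributed technology architecture and standard),

in full, Common Object Request Broker Architecture: components can be written in different programming languages, run on different operating systems, and reside on different machines.

It generally sits between low-level software and upper-level management software.

Others include low-level development in C and assembly. This is pure low-level work with a higher technical starting point and stronger domain background; it helps to have studied electronics and electrical engineering, embedded systems, mechanical manufacturing, data acquisition, and the like...

Pick the technology family you want to work in, choose a language, and set off... :)

Always remember: if you try to learn everything, you will master nothing.

2. Start from the basics; don't aim too high or overrate your abilities; stay grounded in practice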

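C. After-sales (support, implementation)

The demand for development skills is less pronounced here. The work comes mainly after the software is built and involves a lot of customer contact; the requirements are more about grasp of the business and customer relations.

Some software products have a mature business domain; working at this stage lets you learn a great deal of business knowledge quickly and build experience in dealing with customers.

Advice: beginners and recent graduates can lean toward this kind of work and, when the time is right, move straight into development or design. That said, doing this work well is not easy either; if it suits you and the working environment and pay are good, there is no need to think twice...

D. Product management (project management and so on)

This work is mainly about management and suits people with some experience or management ability.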

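Summary of career directions:

Technical: pick one technology platform, master one language and one database... and work at it with focus for a few years.

Technical + management: if you already have some technical experience and your interpersonal and management skills are good, you can develop in this direction.

Technical + business: master one technology platform and one business domain and work hard at it; this kind of talent is the most in demand...

Management: if you have some social and industry experience, decent interpersonal and management skills, and the boss likes you, go for it.

Business (market): if you are very interested in the business and good with customers, you can choose this; suitable technical expertise is icing on the cake.

Technical + market + management: the boss's seat.... :)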